Julian Assange

631 weeks of deprivation of liberty for telling the truth

Illustrated by:

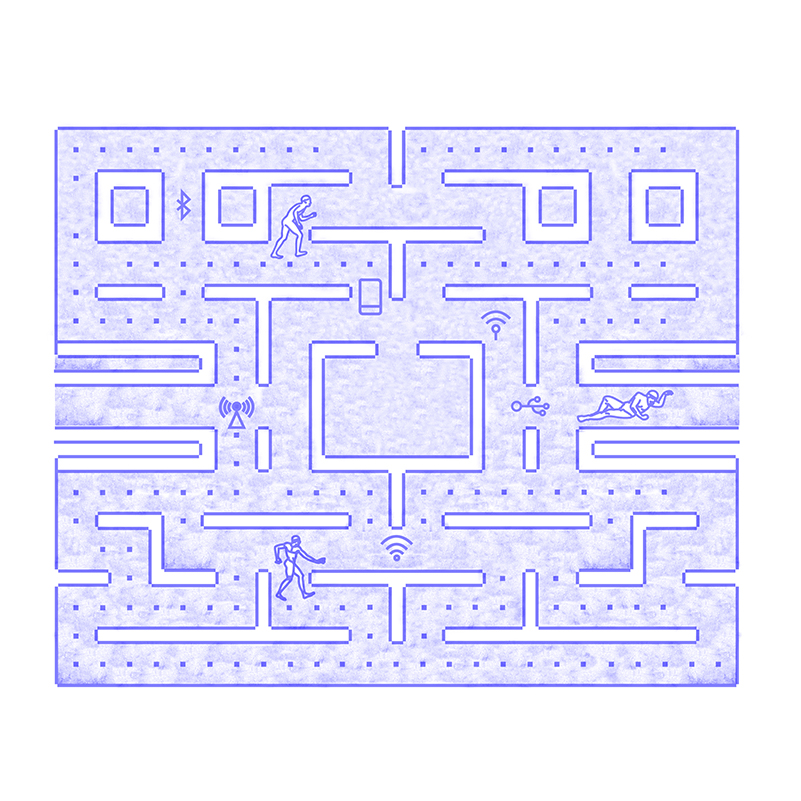

Philosophy does not get good press. An academic discipline that produces ethereal intellectuals who have lost all common sense, it struggles to anchor its discussions in everyday life. Sometimes, however, one can rejoice when the philosophical ideal is put to the political test. One then inevitably witnesses ideology being overtaken by pure thought. The recent universal infatuation with digital technology and its « philosophy » is proof of this. Where do we stand now?

Whatever the problem, the solution is now digital, i.e. smart, a word that originally translates, depending on the context, as chic, elegant, intelligent, clever, sharp, prompt… Smart people are the elite, the cream, the « high »; « smart » objects are therefore a « must » if you don’t want to look as impoverished as you are. Since digital is smart, we should no longer ask ourselves whether things, institutions, or humans ought to be digitized. The answer is, in fact, immediate: not only do we have no choice, but the urgency is great. We have no choice because it is progress, and this progress materializes, for those who accept it, in real wages and remarkable profits, while those who refuse it lose their jobs, go bankrupt and commit suicide (at least socially). It is urgent because great rival powers are throwing themselves into the adventure and, as you may have guessed, we are already very late. The rhetoric of delay is irresistible: the decision has already been taken by those smarter than us; we have so far lacked vision and, above all, pragmatism; it is therefore time to get down to business. Things, institutions and people must pass under its yoke. Let’s note, moreover, the triple conjunction of two arguments: on the one hand, digital technology makes it possible to optimize everything; on the other hand, it makes it possible to monetize everything. We would like to add, without superfluous oratorical precautions, that it also makes it possible to secure things, institutions and citizens, but it is not difficult to see that its development has been accompanied by omnipresent cyber-surveillance (although it goes largely unnoticed) and by a cyber-criminality just as promising in terms of immediate profits.

This being the case, it would be unreasonable to worry about employment because, as is not widely enough known, creative destruction (Schumpeter, 1942) is always at work in our societies of full employment (which are unaware of being so), and productivity gains will allow the creation of more value and (slightly) more jobs than will be destroyed. Demonstrating this oxymoron is, however, all the more hazardous since the goal of robotics is to create a perfect humanoid simulacrum. But perhaps we should conclude that this simulacrum will be deeply nostalgic and will be happy to hire humans to take over the inevitable ancillary tasks? In that case, it would become a volunteer therapist, as the mental health of its employees will be borderline. (François Roustang suggests translating borderline by « borderline(1) »). Or else, created by schizophrenics, can it only be psychotic « itself »? Let’s clarify the issues in order to try to untangle the skein.

The digitization of things has been under way since computers entered the home. In 1984, Apple released the Macintosh, which heralded the rapid spread of personal computers. The multimedia universe developed towards the end of the 1980s with the appearance of CD-ROMs and the possibility of managing different media simultaneously (music, sound, image, video). The Web, or World Wide Web, was born in 1991 (its direct military ancestor, the ARPANET, dates from 1969); it seemed to announce the decline of the book and the forced march towards the digitization of all media. The flexibility of the Web’s interactivity, and especially its capacity to give everyone a voice (which nevertheless requires familiarity with computers, access to a computer connected to a high-speed line and a reliable electricity supplier), could however be called into question with the transition to Web 3.0: under the pretext of the exhaustion of addresses (« IPv4 »), of the fight against fake news, pornography, etc., the content of sites would be controlled; under the pretext of saving the professional press, etc., the Web would become much more expensive. Mobile telephony was introduced in Europe in 1994 and quickly became an indispensable part of most consumers’ daily lives. The conclusion is simple: with the explosion in the use of the Web and the increase in advertising pressure, we are flooded with information that is less and less useful and more and more remotely steered by media and social networks « under orders(2) ».

Digitizing institutions is therefore self-evident: as things become digitized and multimedia becomes more widespread, institutions have been able to commit themselves and redefine the terms of their good governance. Not only the internal management of companies and public organizations, but also the relations they have with their customers and citizens are systematically digitized, processed and archived through networks. Except for the military and sensitive research companies (and even then), they no longer use an intranet in the sense of a local network managed by a local server (often from the VAX-11 family); instead, the intranet is virtualized by restricting the Internet through a firewall. The intranet is now on the Internet (the « cloud » and the NSA) and we can sleep easy in terms of privacy.

Finally, digitizing (and monetizing) humans themselves is the work of the 21st century. Such is, fundamentally, the project of transhumanism; it consists in implanting technology (hardware and software) in the organism in order to cure a handicap, to improve physical and mental performance, or even to immortalize the subject, who is delighted to have been able to afford what his neighbor is still trying to finance. Everything seems possible to those who cling to the technical faith. It has taken two distinct but converging paths: artificial intelligence (AI) and robotics. Indeed, cognitive psychologists have finally put forward the bold thesis of embodied cognition(3): we only know and are conscious through our inscription in space and time. This means two things: on the one hand, AI will not make significant progress until it has a « body »; on the other hand, robots cannot hope to be autonomous without having some semblance of intelligence (which of course remains to be defined). Skeptics will be reassured to learn that, in the 1960s, the computer was seen as a kind of brain (cybernetics: « to think is to compute »), whereas nowadays we understand the brain as a kind of computer (cognitive science: « to compute is to think(4) »). There remains the most painful question of all, that of emotions. As in blackjack, researchers double down here: the end of the twentieth century is indeed characterized by what some have called the « affective turn »(5) of Western thought. However, it is not a question, for example with Antonio Damasio (1994), of claiming that emotions, as emotions, are at the heart of human experience, that they designate the very mystery of existence, but rather of showing, with clinical vignettes and PET scans to support it, that the effective exercise of thought requires an emotional contribution.
So much so that for the cognitivists Minsky and Picard(6), « the question is not whether intelligent machines can experience emotions, but whether there can be intelligent machines that would not have any(7) ». Based on their respective clinical expertise, they emphasize the essential role that emotions play in decision making, perception, learning and thinking. It was known that emotional people do not make good decision-makers; it remained to be discovered that, without emotion, nothing can be decided. In Platonic terms: although the ideal seems to be to unhitch the horses named desire and emotion in order to let thought think for itself, in an autarky that was given a name in 1943 (autism(8)), without emotion and desire reason simply goes nowhere. Picard therefore writes that « if we want to create truly intelligent computers that are capable of interacting naturally with us, we must give them the ability to recognize, understand, and even experience and express emotions(9) ». We obtain, it seems, a paradox of the « be spontaneous » type applied to an algorithm… Here we reach a fork in the road. Either one agrees to put emotion at the center of human experience (or even, like Whitehead, at the center of all experience, whatever it is) and the constitutive opacity of existence is recognized as such; or one tries to integrate emotion into the materialist framework, possibly reformed by the latest quantum speculations, and nothing escapes reason. The stakes are high: if the world and our experience of it were to be totally transparent to reason, determinism would definitively evacuate all forms of spontaneity and, with it, all existential meaning. We can see that the question is no longer only philosophical: it becomes central to the AI development project, and it has always been so in psychiatry.
Since Emmanuel Peterfreund (1971) and especially John Bowlby (1969), computational theories of emotion have seduced many psychiatrists, with Kenneth Colby going so far as to design a paranoia simulator(10). Did he give up on modelling the reasonable, preferring from the outset the improbable algorithm of madness?

This is the context in which some people believe they can write, with the greatest seriousness in the world, that the development of AI will also allow the treatment of illnesses classified as psychiatric: « This is the case, for example, with autism or attention deficit disorder with or without hyperactivity. Some robots can be used to improve the emotional development of these people and teach them to channel their energy(11). » The automaton in question is Pepper, an android (humanoid robot) created by SoftBank Robotics after its acquisition of the pioneer Aldebaran Robotics; it was introduced at a conference on June 5, 2014, and has been on sale since 2015. It is a « companion robot », which « is designed to integrate into homes, recognize family members and adapt to each user. He is able to hold conversations and entertain children(12) ». Its distinctive feature is the detection of the facial expressions, the tone of voice and the particular lexical field used by its « interlocutor ». So we should not be surprised that, in the long run, this robot could « replace some of the positions held by human beings until now. […] The future is more than ever on the move…(13) ».

Look carefully at the general reasoning: on the one hand, this type of robot should take over a whole series of functions that are still, at present, performed by humans; on the other hand, there is no cause for alarm because the robotic revolution is expected to create jobs. Which ones? Precisely those that require faculties found only in humans, such as the ability to recognize emotions (empathy) and to adapt to them (sympathy). To put it more clearly: robots should replace most of the professions requiring hard skills, without directly affecting those requiring soft skills. But the robotic logic itself (« embodied cognition » + « computational theories of emotion ») pragmatically demands (there can be no question of ideology) the colonization of the territory of soft skills. If the reader does not understand how this double bind can be overcome, it is probably because it cannot be. The particular reasoning is equally edifying: while there is no consensus on the exact nature of autism or on the etiology of attention disorders, robotics would be able to help patients. What human contact cannot achieve, interaction with the machine will. The only possible conclusion was drawn by La Mettrie in 1747: Man is a machine. The fact that schizophrenics understand themselves as machines can only support this thesis. Let’s put our thoughts in order.

First, why is it autism that is mentioned here rather than schizophrenia? Unless I am mistaken, since Kanner (1943), the autistic person is the one who is born schizophrenic, and the fundamental question remains to understand schizophrenia itself and to identify its causes (organic/genetic and/or psychological/traumatic). The fact that autistic people are not necessarily institutionalized and that they are sometimes associated with gifted people (Asperger’s) probably explains their appearance in the argument. Second, what can be said about schizophrenia itself? First of all, it is important to know that there may be no clear symptoms (outward signs) that signal the schizophrenic state. Secondly, the interpretive framework that offers the most comparative advantages is that of Ronald D. Laing. In The Divided Self (1960)(14), he explains that, faced with a reality perceived as threatening, the subject, desperately seeking to protect himself, reinforces as best he can his natural and cultural defenses. He proceeds first of all by means of strategies for avoiding reality: amnesia of traumatic events, anesthesia of pain, psychosomatic conversion (hysteria), phobias, addictions, drug dependence, de-socialization, withdrawal, mutism, depersonalization, dissociation. Sometimes tensions are released as a result, but the process leaves a human being emptied of affect and of desire: with no (or as little as possible) affect and no apparent desires (other than the wish to live in hiding). Often, anxiety sets in and a new strategy is put in place simply to survive. Risky behaviors, as well as self-destructive behaviors such as self-harm, sexual promiscuity, and suicide attempts, gradually become inevitable. Sometimes one can track the gradual escalation of the paranoia; sometimes the changeover is sudden and definitive. Let us not forget that etymologically schize means cut.
In short, once the protective strategy has been locked in, the subject becomes a prisoner of his or her own security device and « role play ». An insecure or anxious person is likely to develop two complementary sets of defenses: on the one hand, his or her self-consciousness withdraws into a totally inaccessible « inner self »; on the other hand, the « inner self/false self » system is separated from the body and the latter from the world: as a result, perceptions are truncated and actions are futile. To be free is then to be inaccessible, among other things, to the gaze; it is to have become totally opaque. By understanding psychosis as a desperate attempt to preserve one’s psychic integrity, Laing envisions schizophrenia as both a radical communicative deficit (a breakdown) and an intrapsychic journey (a breakthrough) with a soteriological purpose. More precisely: he considers schizophrenia as a « passage » towards a more authentic existence, as an initiatory, transformative journey(15). The dramatic richness of this strategy only makes an existential and humanistic approach more urgent.

Third, to clarify the issues, it is important to introduce a new clinical category: sociopathy. Unlike the schizophrenic, the sociopath acts in the world in a rationally interpretable, algorithmic way, which does not mean, however, that he can be fully anticipated. Passion and desire, if they exist in him, belong to a deep self; only a cold, machine-like rationality expresses itself. (We could take up large parts of Deleuze and Guattari’s analysis here, replacing « schizophrenia » by « sociopathy ».) One hardly detects any traces of delirium or any sign of irrational thinking in him, but one will discover neither affects (and thus no empathy, remorse or shame) nor desires (and thus no enjoyment of the present moment). The sociopath probably doesn’t dream; his life unfolds like a program that no one has written and to which he clings like a raft. He may have difficulty following the details of such a life plan. As a result, he is forced to mimic affects and desires in order to mask his disability. When confronted by a perceptive observer, the sociopath will appear in the bland light of superficial charm, chronic falsehood and hypocrisy. In short, the sociopath pretends to have, manifest and identify emotions that he does not have. Through a long process of trial and error, he can deceive his interlocutors. Don’t we find here, very precisely, the portrait-robot of our high-end androids?

In order not to weigh down the argument, nothing will be said about hyperactivity, the primary cause of which is clearly, despite what recent specialists have said(16), lifestyle and the massification of education. What to conclude? To believe that autism can be the object of a machine therapy is characteristic of the technophile sociopath. When the argument is extended to the digitization of education, this feeling only becomes more acute. Let’s follow the thread: hard skills are quantifiable competencies, which can be evaluated objectively and lead to a diploma; soft skills are more transversal competencies, such as listening, pedagogy, empathy, adaptability, creativity, stress management(17)… These soft skills must be instilled, not out of humanism, but in order to be employable, i.e. to be able to constantly improve the products, processes or services for which one is responsible. They can be defined by extension(18): critical thinking and inductive skills, international collaboration, leadership ability, flexibility and adaptability to technological changes, initiative and entrepreneurship, oral and written communication, ability to access information and analyze its relevance and accuracy, curiosity and imagination, empathy (reflective, emotional, cognitive), creativity. On closer inspection, however, it is difficult to see how such so-called skills can be taught; at most, a climate can be created that is conducive to individual growth. At a minimum, it is a matter of creating an atmosphere that allows for both individuation and solidarity; at a maximum, more demanding practices can be invoked, but they belong to a freely chosen asceticism, not to a fee-paying curriculum(19). All this does not mean, of course, that it is forbidden to sell vanity and emptiness, as long as they are spectacular.

The school and academic ethos is simple: the school must take care of the employability of its pupils and the university that of its students, which means that, in an increasingly automated environment, « soft » skills remain as important as « hard » skills, that competition among pupils (students) is still relevant, and that competition among schools (universities), and among the teachers within them, must also be promoted out of a very democratic concern for transparency. More than ever, the market of pure and perfect competition is defined by the atomicity of supply and demand, the homogeneity of the product, the transparency of the market and perfect mobility. The privatized school (university) will thus have to grapple with these ideological (and dystopian) axioms: atomicity of supply and demand (each agent is a drop of water in the sea of the market); homogeneity of the product (there are distinct markets for each type of product); transparency of the market (perfect information of the agents); perfect mobility (free entry, free exit and absence of obstacles to the circulation of the factors of production)(20).

We know that we must aim for excellence, whether or not we notice the robotic contradiction. Although the use of ICT digitizes the mind without being able to liberate its humanity, the exact opposite is claimed, especially because of the theoretical return of tutoring and preceptorship. To put it plainly: the chronic ineffectiveness of mass education is now recognized, except, of course, that historically, public education has been used to make the masses docile and to repress the hydra of communism (c. 1870), to reinforce patriotism in a colonialist context (c. 1880), to make up for the professional deficit after the last world hecatomb (c. 1946), to reduce education to the status of a certifying commodity (c. 1979), to participate in the knowledge economy (c. 1999; i.e., the « Knowledge economy » of the usual World Bank, European Union and OECD), and, finally, to split first-class jobs from downgraded ones (c. 2016, i.e., « Mc & Mac jobs »). Specifically, the imperative of continuing education and lifelong learning (please appreciate the difference) may translate into the fundamental irrelevance of acquired training, even if it is academic. Any diploma must be perceived as obsolescent (technically, functionally, and by design), while the worker is supposed to be unable to adapt by himself, and his professional environment is judged unable to train him or prefers to subcontract…

Let’s summarize. What should the school look for? Profit. How? By developing a training offer that corresponds very precisely to the demand of companies, thanks to a real « human resources » department using the latest psychometric tools and adequate salary incentives. It will then attract the best students and be able to afford the best teachers, the ones whose career plan is to earn $1.5 million a year and drive around in a Lamborghini with a Dick license plate(21). What do companies ask for? The hard digital version, i.e. advanced computer skills, and the soft digital version, i.e. the entrepreneurial and managerial interpersonal skills and leadership that enable the optimization of the machinization of life. Can we have faith in the destruction that creates jobs and meaning? Even if, in the past, there may have been examples of such a paradoxical intercession, the very idea of replacing human jobs, hard and soft, by algorithms and droids definitively falsifies it.

Similarly, if you ask the question about the impact of digitization on education, you get a resounding condemnation. The creation of soft jobs and the preservation of an entire faculty alienated by its Orwellian liberalization can only be a necessary temporary measure from the perspective of universal robotization. Promoting digital education is equivalent to considering schizophrenia as a skill to be developed without further ado. Before becoming machines, we will start by thinking like machines. Unfortunately, this transformation will never be complete, and we will have to resign ourselves to a form of schize between our contingent existential palpitations and the necessary interface with the clinically sociopathic droid.

Michel Weber